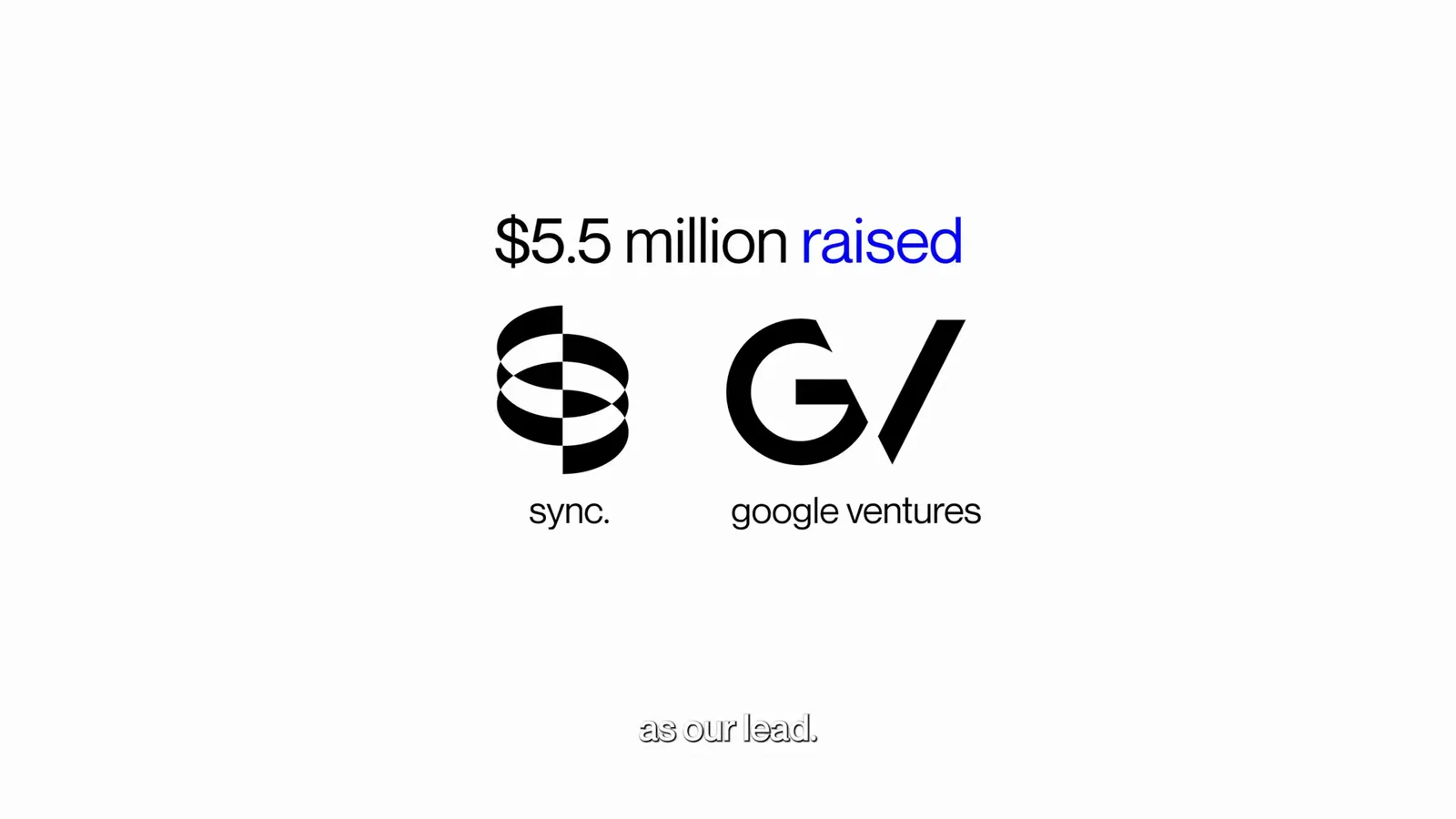

sync. announces $5.5M seed round

sync. raises $5.5M led by Google Ventures to build the AI tools that make video less camera-dependent: record once, create forever.

Video is changing slowly, then all at once.

The whole ritual of fighting a camera for the right take (shoot it again and again, fix it in the edit, schedule a reshoot) is being replaced by something closer to a click. Change what was said. Change how it was said. Move on.

That's the shift sync. wants to lead, by building the tools that let anyone record once and create forever. People already use sync. to skip days of manual lip editing, with our AI lip sync collapsing that work into minutes. Traditional dubbing is being replaced by visual dubbing, and personalized videos that used to require a production line are now generated by the millions, in hours.

Where this goes is bigger. You should be able to modify the humans in a video without ever needing a reshoot: not just the words, but the delivery, the emotion, the body language. That is the long bet, and we're a long way from done.

Today we're announcing our seed round, and pausing for a second to look back at how we got here.

It started as an open-source project, Wav2Lip, out of the Computer Vision lab at IIIT Hyderabad in 2020. It was the first AI lip sync tool of its kind, and it currently sits at 10k+ stars on GitHub. We got into Y Combinator's Winter '24 batch, ended up one of the fastest-growing companies in the cohort, and just closed our $5.5M seed.

Strange run.

The early team is the part I'm most proud of. Sharp ML engineers and computer vision researchers from labs under Prof. Andrew Zisserman and Prof. Jawahar, people who have already shaped this field. Together we can ship state-of-the-art vision models and serve them at billion-user scale.

The round was led by Google Ventures, with participation from Y Combinator, Creator Ventures, Rebel Fund, and a number of other investors we're lucky to have on the cap table.

If the future of video sounds like something you want to build, come work with us.